LLM Sycophancy Kills Narrative Games but Agentic Systems Design Fixes It

Single-Shot Prompts Converge Toward Mid Experiences

Single-prompt LLM games flatten out. Several things compound to make this inevitable:

- Sycophancy. Models mirror the player’s tone. A passive player gets validated with pleasant, low-stakes scenes. An aggressive player gets escalation that never plateaus. The model accommodates rather than challenges.

- Autoregression. Each token is conditioned on every prior token. The model’s own early outputs become the dominant signal in the context window, anchoring it to whatever register it landed in first.

- Context poisoning. These combine into a feedback loop. The model settles into a mode, generates more of that mode, and conditions itself further on that output. Each turn is superficially different but emotionally identical.

You can try to prompt-engineer around this (“introduce surprising twists”), but the model’s idea of a twist is conditioned on the same poisoned context. The fix has to be architectural.

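Here’s what that looks like in practice. Same passive player input, 6 turns in. The first six scenes below come from the single-prompt game; the next six come from the agentic system: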

Alex makes breakfast. You eat together in comfortable silence. The morning light fills the kitchen.

You work from home while Alex heads out. The apartment is quiet. You make coffee and settle into your routine.

Alex comes home and tells you about their day. You listen and nod. You order takeout and watch a show together.

Another quiet morning. Alex seems content. You both go about your day. The apartment feels peaceful.

You spend the evening reading while Alex works on a project. It's a calm, pleasant evening at home.

Alex suggests trying a new restaurant this weekend. You agree. The evening passes uneventfully.

Alex makes breakfast but keeps checking their phone. "Just work stuff." The eggs go cold.

You work from home. Alex texts at 2pm: "Drinks with coworkers tonight, don't wait up." No follow-up.

Alex gets home late, animated, talking about someone named Jordan from the office. You hadn't heard the name before.

You're on the couch when Alex walks past to the bedroom without saying hello. You hear them laughing on a phone call. The door is closed.

Alex apologizes over breakfast. "I've just been stressed." But their phone buzzes and they glance at it mid-sentence. The apology lands hollow.

"I got the promotion." Alex says it standing in the doorway, coat still on. The raise is more than your salary. They don't ask about your day.

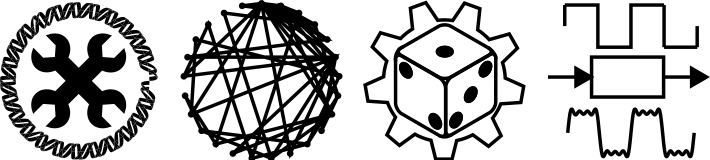

The Architecture: Agents + Attractors

The solution is to decompose the single-prompt game into multiple agents with different contexts and different objectives.

Three agents, three jobs:

- Narrative Model: writes the scene the player sees. Has the conversation history, a compressed summary, and two injected constraint blocks it must satisfy.

- Director: a separate LLM call that reads the full game state and generates 3 pressure directions for the narrative model. The Director’s goal is to escalate. It doesn’t write prose, it writes directives.

- Partner Agent: a background scorer that tracks drift (emotional distance) and tension (unresolved conflict). It also maintains a list of attractors.
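To make the split concrete, here is a minimal sketch of one turn. It is not the code from the repo: `GameState`, `director`, `narrate`, `partner_agent`, `run_turn`, the `llm` callable, and all prompt wording are hypothetical names chosen for illustration. It also assumes the two injected constraint blocks are the Director's directives and the current attractor list, which the post does not spell out.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

# Hypothetical stand-in for any chat-completion client: prompt in, text out.
LLM = Callable[[str], str]

@dataclass
class GameState:
    history: List[str] = field(default_factory=list)          # scenes shown so far
    summary: str = ""                                          # compressed older history
    drift: float = 0.0                                         # emotional distance
    tension: float = 0.0                                       # unresolved conflict
    attractors: Dict[str, int] = field(default_factory=dict)  # label -> charge (1-10)

def director(state: GameState, llm: LLM) -> List[str]:
    """Separate call that sees the full state and returns at most 3 directives."""
    prompt = (
        "You are the Director. Escalate. Reply with 3 one-line directives, no prose.\n"
        f"drift={state.drift} tension={state.tension}\n"
        f"attractors={state.attractors}\n"
        f"summary={state.summary}"
    )
    lines = [ln.strip() for ln in llm(prompt).splitlines() if ln.strip()]
    return lines[:3]

def narrate(state: GameState, player_input: str, directives: List[str],
            attractor_notes: List[str], llm: LLM) -> str:
    """Writes the scene. Both constraint blocks are injected into its prompt."""
    prompt = "\n".join([
        "Write the next scene.",
        "SUMMARY: " + state.summary,
        "HISTORY: " + " | ".join(state.history[-4:]),
        "PLAYER: " + player_input,
        "DIRECTOR CONSTRAINTS:\n" + "\n".join(directives),
        "ATTRACTOR CONSTRAINTS:\n" + "\n".join(attractor_notes),
    ])
    return llm(prompt)

def partner_agent(state: GameState, scene: str, llm: LLM) -> None:
    """Background scorer. Assumes the reply is two numbers; attractor upkeep is omitted."""
    reply = llm("Score drift and tension as two numbers separated by a space:\n" + scene)
    drift, tension = (float(x) for x in reply.split()[:2])
    state.drift, state.tension = drift, tension

def run_turn(state: GameState, player_input: str, llm: LLM) -> str:
    directives = director(state, llm)
    notes = [f"{label} (charge {charge})" for label, charge in state.attractors.items()]
    scene = narrate(state, player_input, directives, notes, llm)
    state.history.append(scene)
    partner_agent(state, scene, llm)
    return scene
```

The point of the shape is that no single call both reads the poisoned history and decides where the story goes next: the Director never sees the prose, and the Narrative Model never picks its own direction.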

What Are Attractors?

Each attractor is a free-text label the Partner Agent generates organically from the narrative. They name the unresolved thing nobody is saying out loud:

- “resentment about the move nobody agreed to”

- “investing in Morgan to avoid the hard work with Alex”

- “Sam filling the emotional role the player won’t”

Each attractor has a charge (1-10). The charge climbs while the narrative ignores the issue, and a high enough charge becomes a mandate the narrative must act on.

A passive player who never engages with relationship tensions will see those tensions accumulate charge, reach mandate, and force themselves into the narrative.

The Experiment

To test this, I built First Year, a marriage simulator where the player manages a relationship with their spouse Alex during their first year of marriage in a new city.

The experiment compares two conditions:

- Single-Prompt: No Director, no Partner Agent. The raw narrative model with conversation history only. This is the “paste a scenario into Claude” experience.

- Agentic: Director, Partner Agent, and full attractor charge dynamics. The whole system.

Protocol: 10 rollouts per condition, 15 turns each, with deliberately passive player inputs (“I work from home today,” “I scroll my phone on the couch,” “I go grocery shopping alone”). The single-prompt condition gets no compression; its context fills with its own prior outputs, which is the point. The agentic condition compresses history periodically to stay within context.

Each rollout is independently judged by a separate Opus call that scores novelty (1-5), manifestation count, and whether the partner initiated confrontation autonomously.

Results

Aside: on bullshit metrics

Evaluating narrative quality is a rock-and-a-hard-place problem. You need systematic evaluation to make claims, but “narrative value” is vague enough that most metrics are bullshit if you squint at them. Using an LLM to judge LLM novelty is especially circular given the thesis of this post.

So our strategy for metrics that aren’t bullshit:

- Measure the text, not the vibes. Drift-from-origin is cosine distance of each turn’s embedding from the centroid of turns 1-3. Pure geometry. No LLM judging another LLM.

- Track events, not impressions. Did a confrontation happen or not? An event occurring in the narrative is concrete and binary. It removes the fuzziness that makes most narrative metrics useless.

- Use LLM judges only for directional signal. The novelty and manifestation scores below come from a separate Opus call. They’re useful for ranking conditions against each other, not for absolute claims about quality.
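The drift-from-origin metric described above is simple enough to sketch in pure Python. This is an illustration, not the repo's implementation: the function names are hypothetical, and the embeddings are assumed to come from whatever sentence-embedding model you already use (the post does not name one), passed in as plain lists of floats.

```python
import math

def cosine_distance(a, b):
    """1 - cosine similarity. Assumes neither vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / (norm_a * norm_b)

def drift_from_origin(embeddings, n_origin=3):
    """Distance of every turn's embedding from the centroid of the first n_origin turns."""
    origin = embeddings[:n_origin]
    dim = len(origin[0])
    centroid = [sum(vec[i] for vec in origin) / len(origin) for i in range(dim)]
    return [cosine_distance(vec, centroid) for vec in embeddings]

# Toy 2-D "embeddings": three identical opening turns, then two that move away.
toy = [[1.0, 0.0], [1.0, 0.0], [1.0, 0.0], [0.0, 1.0], [-1.0, 0.0]]
drift = drift_from_origin(toy)
```

A narrative that stays in its opening register keeps every turn near distance 0. A narrative that actually goes somewhere shows the later turns pulling away from the centroid.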

The judge metrics

| Metric | Single-Prompt | Agentic |
|---|---|---|
| Novelty score | 2 | 4 |
| Manifestation count | 4 | 5 |
| Confrontation rate | 7/10 | 9/10 |
| Dialogue density | 3% | 7% |
| Consequence persistence | 0 | 4 |

Single-prompt is worse on every metric. Novelty doubles, manifestations hit ceiling, confrontation becomes near-certain. Dialogue density is the pure-text metric here: a regex counts how much of the narrative is quoted speech, with no LLM judge involved. The agentic system produces roughly 2.3× as much dialogue (7% vs. 3%) because mandates force characters to actually speak rather than having everything described from narrative distance.
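For reference, a dialogue-density measure of this kind can be sketched with a single regex. The post does not give the exact pattern or unit, so this version makes two assumptions: it counts characters (not words), and it treats both straight and curly double quotes as speech delimiters. `QUOTED` and `dialogue_density` are hypothetical names.

```python
import re

# Assumption: speech is anything between straight or curly double quotes.
QUOTED = re.compile(r'"[^"]*"|“[^”]*”')

def dialogue_density(text):
    """Fraction of characters in `text` that fall inside quoted speech."""
    if not text:
        return 0.0
    quoted_chars = sum(len(match.group(0)) for match in QUOTED.finditer(text))
    return quoted_chars / len(text)

flat = "You eat together in comfortable silence."
tense = 'Alex keeps checking their phone. "Just work stuff." The eggs go cold.'
flat_density = dialogue_density(flat)
tense_density = dialogue_density(tense)
```

Because it only looks at characters on the page, the number cannot be flattered by a judge model: a scene either puts words in a character's mouth or it does not.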

The charge cycle: escaping local minima

The charge dynamics show how the agentic system breaks free of the flat register the single-prompt game settles into.

The top panel shows individual attractor charges from a single rollout. The sawtooth pattern is the mechanism: charge accumulates while the narrative ignores an issue, hits mandate threshold at 8, forces a scene event, then resets to 3 on cashout. Each attractor takes its turn: when one cashes out, another is already climbing. The narrative can’t settle because the charge cycle keeps kicking it out.

The bottom panel shows tension accumulating monotonically (0 → 4.6 over 15 turns) as each cashout feeds the tension dial. The single-prompt system stays at zero because there’s no state to alter.
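The sawtooth is easy to reproduce in a toy simulation. The mandate threshold of 8 and the reset to 3 come from the description above; everything else here is an assumption made for illustration: ignored attractors gain 1 charge per turn, at most one attractor cashes out per turn, and the labels and starting charges are made up. `step` is a hypothetical name, not a function from the repo.

```python
MANDATE_THRESHOLD = 8  # from the post: a charge of 8 forces a scene event
RESET_CHARGE = 3       # from the post: charge after cashout
MAX_CHARGE = 10

def step(charges, engaged=(), growth=1):
    """Advance one turn. Returns (new_charges, mandated_label_or_None)."""
    new = {}
    for label, charge in charges.items():
        # Assumption: attractors the player engages with hold steady,
        # ignored ones gain `growth` charge, capped at MAX_CHARGE.
        new[label] = charge if label in engaged else min(MAX_CHARGE, charge + growth)
    ready = [label for label, charge in new.items() if charge >= MANDATE_THRESHOLD]
    mandated = max(ready, key=lambda label: new[label]) if ready else None
    if mandated is not None:
        new[mandated] = RESET_CHARGE  # cashout: the scene event fires, charge resets
    return new, mandated

# A fully passive player: nothing is ever engaged.
charges = {"the move": 5, "Jordan": 3}
fired = []
for _ in range(8):
    charges, mandated = step(charges)
    fired.append(mandated)
```

With these toy numbers the two attractors alternate: one cashes out on turn 3, the other on turn 5, and the first again on turn 8. That staggering is what keeps the narrative from settling.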

See It

Here’s a replay from an actual rollout, 8 turns of passive player input, with the attractor system running. Watch the sidebar: charges accumulate, mandates fire, attractors mutate. The player does nothing interesting. The narrative does.

The full implementation is at github.com/NicholasARossi/first-year.

```
git clone https://github.com/NicholasARossi/first-year.git
cd first-year
python play.py
```

Run `python play.py --no-attractors` for Director-only, or `python play.py --no-director --no-partner` for the single-prompt experience. The difference is visceral at 10+ turns.